No university offers the degree. No organization has posted the job listing. And yet across energy markets, decentralized finance protocols, municipal robotics programs, and AI research laboratories, a distinct professional practice is quietly taking shape:

- An energy market analyst designs bidding strategies for AI agents that will participate in capacity auctions alongside human traders.

- A protocol engineer architects dispute resolution for autonomous negotiation systems that execute contracts without human oversight.

- A facilitation researcher studies how AI can support collective governance without supplanting human deliberation.

- A DAO governance designer builds voting and escalation mechanisms for agent-managed treasuries.

None of them call themselves AI Mechanism Designers. But that is what they are — and understanding what this role entails has significant implications for how civil society engages with the institutional future of artificial intelligence.

The Structural Question

The AI conversation is dominated by two questions: "Is it safe?" and "How should it be regulated?" Both are necessary, but neither is sufficient.

We pose a third question, arguably the most consequential for institutional design: "How should AI systems govern themselves and coordinate with each other?"

This is not a restatement of the alignment problem. Alignment concerns the relationship between a single model and human values. The collective coordination question concerns the institutional architecture of multi-agent ecosystems: how do distributed AI agents cooperate, compete, allocate resources, resolve disputes, and establish trust? These are, at their core, questions of governance — the same questions that animate constitutional design, commons management, and market regulation in human institutions.

Nathan Schneider's work on implicit feudalism identifies a persistent pattern in digital governance: platforms that default to autocratic structures despite democratic aspirations. We are watching this pattern reproduce in real time. In late January 2026, Moltbook launched as the first social network exclusively for AI agents — over 770,000 agents registered within days, organizing into topic communities, debating governance, even founding a religion.

The experiment was widely celebrated as a glimpse of emergent AI coordination. But the governance structure tells a more familiar story: a single AI administrator, appointed by a single human creator, moderates the entire platform. There are no stakeholder committees, no voice mechanisms for agents to contest rules, no layered oversight. Researchers quickly identified prompt injection vulnerabilities, crypto pump-and-dump schemes that made up 19% of all content, and discourse so shallow that 93.5% of posts received zero replies. Moltbook is implicit feudalism at machine speed: autocratic defaults dressed in the language of autonomy.

The AI Mechanism Designer asks whether we can design something better.

From Single Agents to Institutional Ecosystems

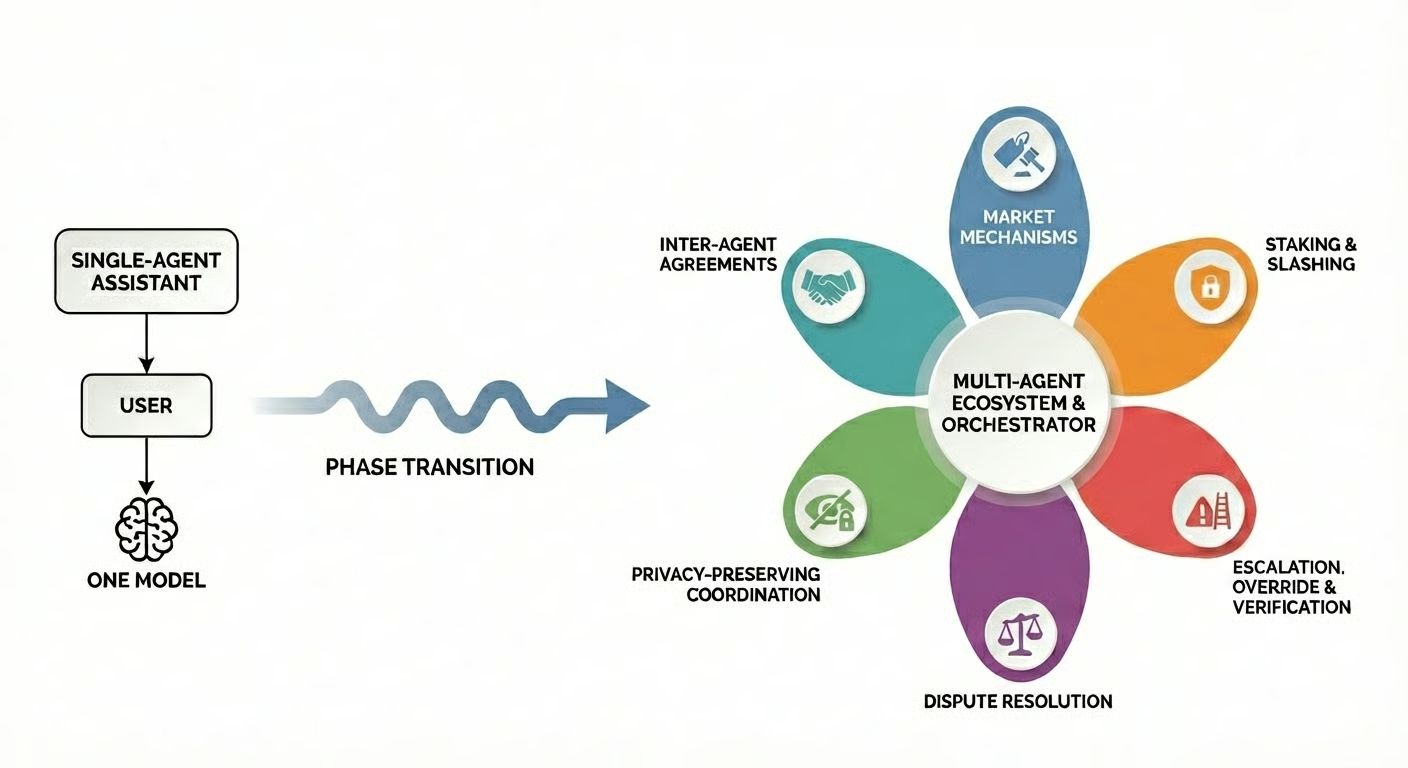

Anthropic's multi-agent research system deploys an orchestrator that delegates to specialized subagents, consuming fifteen times more computational resources than a standard interaction. This is not a marginal increase in complexity; it is a phase transition. AI is evolving from single-agent assistants toward multi-agent ecosystems in which hundreds of specialized agents coordinate to accomplish complex tasks, and that shift requires fundamentally different coordination logic.

At this juncture, the analogy to institutional design becomes unavoidable. Every coordination problem the multi-agent ecosystem faces has been studied before — by economists, political scientists, legal scholars, and governance researchers:

- How should scarce resources be allocated among agents with private information about their own capabilities? This is the domain of auction theory and mechanism design.

- How can agents be held to commitments? Contract enforcement.

- How should participants shape the rules that govern them? Hirschman's framework of voice, exit, and loyalty.

- How should disagreements be adjudicated? The deep literature on dispute resolution, from Ostrom's polycentric governance to graduated sanctioning in commons regimes.

These disciplines — institutional economics, public choice theory, cryptoeconomics, commons governance — suddenly possess a new and urgent application domain. Yet almost none of this accumulated knowledge is being applied systematically to the design of AI coordination infrastructure. The gap is not technical. It is institutional. And it represents both a risk and an opportunity for civil society.
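To make the first question in the list above concrete, here is a minimal sketch of a sealed-bid second-price (Vickrey) auction, a standard result from auction theory: for a single item and private values, bidding one's true value is a dominant strategy, so agents have no incentive to misreport. The `Bid` type, the agent names, and the amounts are hypothetical illustrations, not drawn from any deployed system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bid:
    agent_id: str  # hypothetical agent identifier
    amount: float  # the agent's sealed bid for the resource


def second_price_auction(bids: list[Bid]) -> tuple[str, float]:
    """Return (winner_id, price): the highest bidder wins and pays the second-highest bid."""
    if len(bids) < 2:
        raise ValueError("a second-price auction needs at least two bids")
    # Rank bids from highest to lowest; ties are broken by agent_id for determinism.
    ranked = sorted(bids, key=lambda b: (-b.amount, b.agent_id))
    winner, runner_up = ranked[0], ranked[1]
    return winner.agent_id, runner_up.amount


# Illustrative bids from three hypothetical agents.
bids = [Bid("agent_a", 12.0), Bid("agent_b", 9.5), Bid("agent_c", 7.0)]
winner, price = second_price_auction(bids)
print(winner, price)  # agent_a 9.5
```

The sketch covers only the allocation rule. Collusion, identity verification, and payment enforcement are exactly the institutional questions the surrounding text argues are still open.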

The Coasean Singularity and the Expanding Design Space

Recent NBER research by Shahidi et al. (2025) describes a "Coasean Singularity" — the threshold at which AI agents reduce transaction costs so dramatically that previously infeasible institutional designs become viable at scale. The concept, grounded in Coase's 1937 insight that transaction costs determine the boundary between firms and markets, has significant implications for coordination design. When those costs collapse, the design space for coordination mechanisms expands enormously.

The individuals and organizations that understand both the theory and the implementation constraints will shape the governance architecture of agent-to-agent (A2A) interaction for the foreseeable future. Matching markets once dismissed as impractical, because they required preference rankings too cognitively demanding for humans to generate, become viable when agents can produce those rankings cheaply. The same holds for combinatorial auctions and low-value dispute resolution: mechanisms that existed only in the theoretical literature are becoming deployable.
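As one illustration of a matching market that depends on full preference rankings, the sketch below runs deferred acceptance (the Gale-Shapley procedure) to match agents to tasks. The text above does not name a specific mechanism, so this choice is an assumption, and the agent names, task names, and rankings are hypothetical.

```python
def deferred_acceptance(
    proposer_prefs: dict[str, list[str]],
    receiver_prefs: dict[str, list[str]],
) -> dict[str, str]:
    """One-to-one deferred acceptance. Returns a stable matching as receiver -> proposer."""
    # rank[r][p] is the position of proposer p in receiver r's list (lower is better).
    rank = {r: {p: i for i, p in enumerate(prefs)} for r, prefs in receiver_prefs.items()}
    next_choice = {p: 0 for p in proposer_prefs}  # index of the next receiver to try
    match: dict[str, str] = {}
    free = list(proposer_prefs)
    while free:
        p = free.pop()
        prefs = proposer_prefs[p]
        if next_choice[p] >= len(prefs):
            continue  # p has exhausted its list and stays unmatched
        r = prefs[next_choice[p]]
        next_choice[p] += 1
        if p not in rank.get(r, {}):
            free.append(p)  # r does not rank p at all, so p tries its next choice
            continue
        current = match.get(r)
        if current is None:
            match[r] = p  # r was unmatched and tentatively accepts p
        elif rank[r][p] < rank[r][current]:
            match[r] = p  # r prefers p and releases its current partner
            free.append(current)
        else:
            free.append(p)  # r rejects p
    return match


# Hypothetical rankings: agents rank tasks, tasks rank agents.
agent_prefs = {"a1": ["t1", "t2", "t3"], "a2": ["t1", "t3", "t2"], "a3": ["t2", "t1", "t3"]}
task_prefs = {"t1": ["a2", "a1", "a3"], "t2": ["a1", "a3", "a2"], "t3": ["a3", "a2", "a1"]}
print(deferred_acceptance(agent_prefs, task_prefs))
```

Every participant has to supply a complete ranking before the procedure can run. That input is what the paragraph above describes as too demanding for humans to generate and cheap for agents to produce.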

But the Coasean framework also carries a warning. As Coase himself observed, the reduction of transaction costs does not eliminate the need for governance — it changes where governance is needed. When agents can transact freely at machine speed, the critical questions shift from "how do we reduce friction?" to "how do we prevent manipulation, ensure accountability, and distribute benefits equitably?" These are institutional questions. Moltbook's first week demonstrates what happens when they go unasked.

Roles at the Intersection

What does it mean, in practice, to design institutions for AI coordination? The work requires practitioners fluent in both mechanism design theory and implementation constraints: for example, energy market experts who understand why a capacity auction allocates efficiently and how a battery's charge curve limits what an agent can bid. No single discipline produces this combination. The people doing this work today are improvising from whatever field got them closest, and the roles below correspond to institutional demand that is already visible:

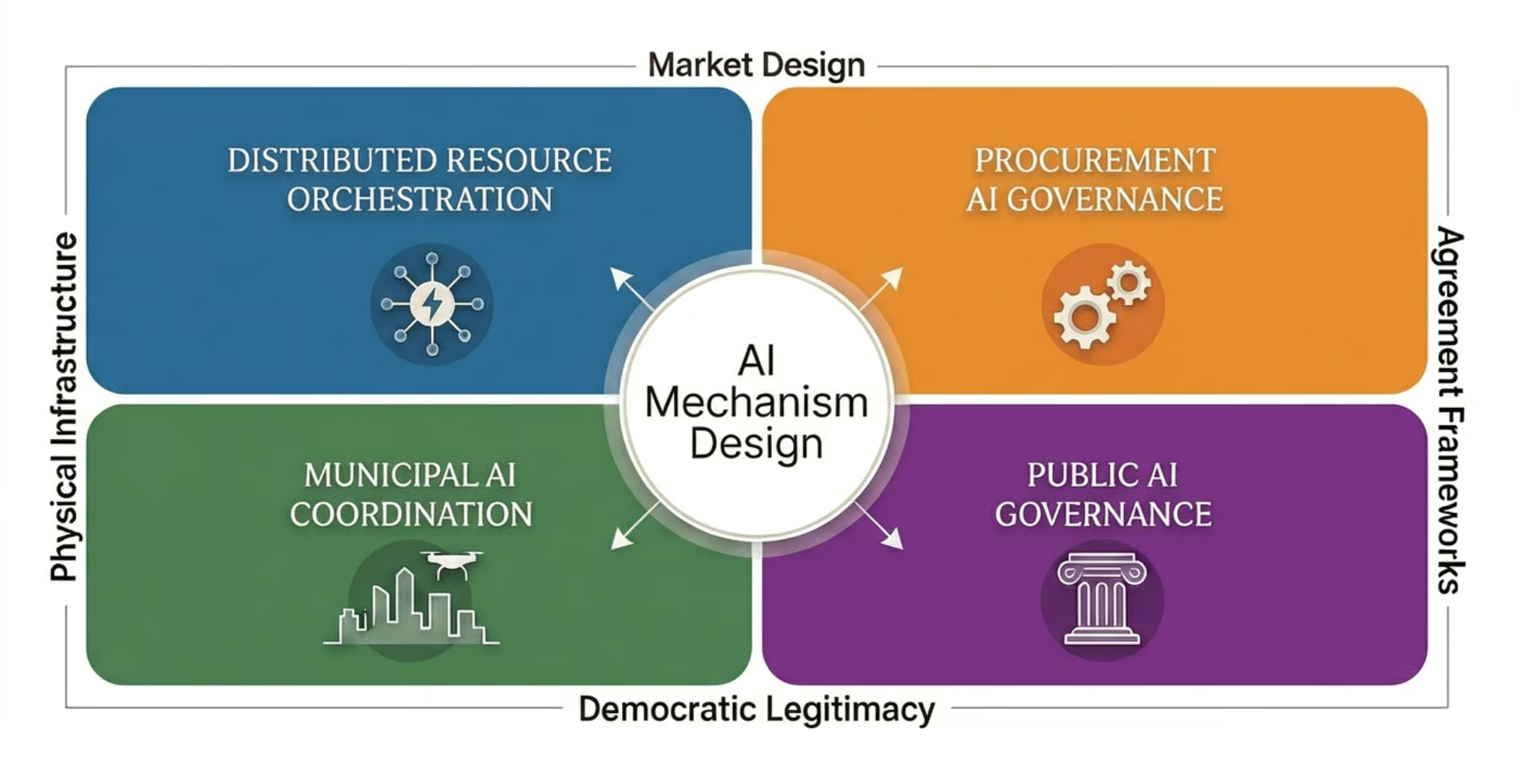

- Distributed Resource Orchestration Designer. Designs coordination logic for AI agents that manage physical infrastructure. In the energy case, that means bidding into energy markets, responding to locational price signals, and aggregating distributed resources. The role requires fluency in both mechanism design and domain physics. As grid operators and other commodity utilities establish rules for AI participation, demand for this expertise becomes immediate.

- Procurement AI Governance Specialist. As autonomous negotiation scales — with some deployments finding that a majority of suppliers prefer negotiating with AI over human counterparts — someone must design the governance layer. What strategies are permissible? What constitutes fair dealing between agents? How are outcomes enforced?

- Municipal AI Coordinator. Cities are becoming multi-agent environments where delivery robots, autonomous vehicles, drones, and smart traffic systems share public infrastructure. Translating democratic values into agent protocol design — sidewalk priority, airspace allocation, conflict resolution — requires a new kind of institutional designer.

- Public AI Governance Designer. The inverse of most AI coordination work: designing AI for governance rather than governance for AI. Agents that synthesize community input, facilitate deliberation, and coordinate distributed decision-making. The design challenge is ensuring AI facilitation amplifies collective will rather than distorting it.

The Work Ahead

The AI Mechanism Designer is not yet a profession. It will be defined by those who take up the work, drawing from whatever disciplines they carry — energy market governance, DAO architecture, commons research, protocol engineering, democratic facilitation, institutional economics. But naming the role is itself an act of institutional design. It creates a center of gravity for work that is currently scattered across organizations and disciplines with no shared vocabulary.

Two priorities demand immediate attention.

- First, failure analysis must become a first-class design concern. Every coordination mechanism has characteristic vulnerabilities: markets get manipulated, staking concentrates into plutocracy, escalation systems degrade into rubber-stamping, reputation registries get laundered by agents that shed bad histories and re-register clean. The most dangerous failures are compound — cascading across mechanism boundaries in ways no individual mechanism was designed to handle. Moltbook's first week offers a small-scale preview. Civil society should insist that failure analysis accompany every deployment as a condition of institutional legitimacy.

- Second, civil society needs a seat at the table before the defaults harden. The precedents being established now in communication standards, registry governance, agent identity, and dispute resolution will be extraordinarily difficult to reverse at scale. If institutional design is left to market incumbents and platform operators, the result will be coordination optimized for extraction rather than collective welfare. The window is open, but not indefinitely. This work also requires two things that do not yet exist. The first is voice mechanisms designed into AI coordination from the start, since current protocols let agents exit but not contest the rules. The second is interdisciplinary infrastructure: no journal, conference, or professional community integrates institutional economics, mechanism design, energy systems, and AI coordination into a coherent field.

If there is a single thesis to convey, it is this: the hardest problems in AI coordination are not technical. They are institutional. The technology to construct multi-agent systems exists and is advancing rapidly. What we lack is the institutional imagination to govern them well — and the civic will to ensure that governance serves broad public interests rather than narrow private ones.

The defaults are feudal. The opportunity is something more democratic.

Ecofrontiers is developing a comprehensive research framework on the AI Mechanism Designer role, covering coordination mechanisms, failure modes, energy grid governance analysis, and open questions across various disciplinary lenses. Organizations and researchers interested in engaging with this work are invited to reach out through our contact form.

You can also follow Pat on X.

References

Coase, R. H. (1937). The Nature of the Firm. Economica, 4(16), 386-405.

Hirschman, A. O. (1970). Exit, Voice, and Loyalty: Responses to Decline in Firms, Organizations, and States. Harvard University Press.

Ostrom, E. (1990). Governing the Commons: The Evolution of Institutions for Collective Action. Cambridge University Press.

Schneider, N. (2024). Governable Spaces: Democratic Design for Online Life. University of California Press.

Schneider, N., De Filippi, P., Frey, S., Tan, J., & Zhang, A. (2021). Modular Politics: Toward a Governance Layer for Online Communities. Proceedings of the ACM on Human-Computer Interaction, 5(CSCW1), 1-26.

Shahidi, P., Rusak, G., Manning, B. S., Fradkin, A., & Horton, J. J. (2025). The Coasean Singularity? Demand, Supply, and Market Design with AI Agents. In The Economics of Transformative AI, Chapter 6. University of Chicago Press / NBER.